Introduction

People have a lot of questions about AI’s capabilities these days. Does it reason? Can it plan? Is it just for code generation? But the most important question, the one that actually determines whether you have a useful tool or a genuine thinking partner, is this: does it have a good memory?

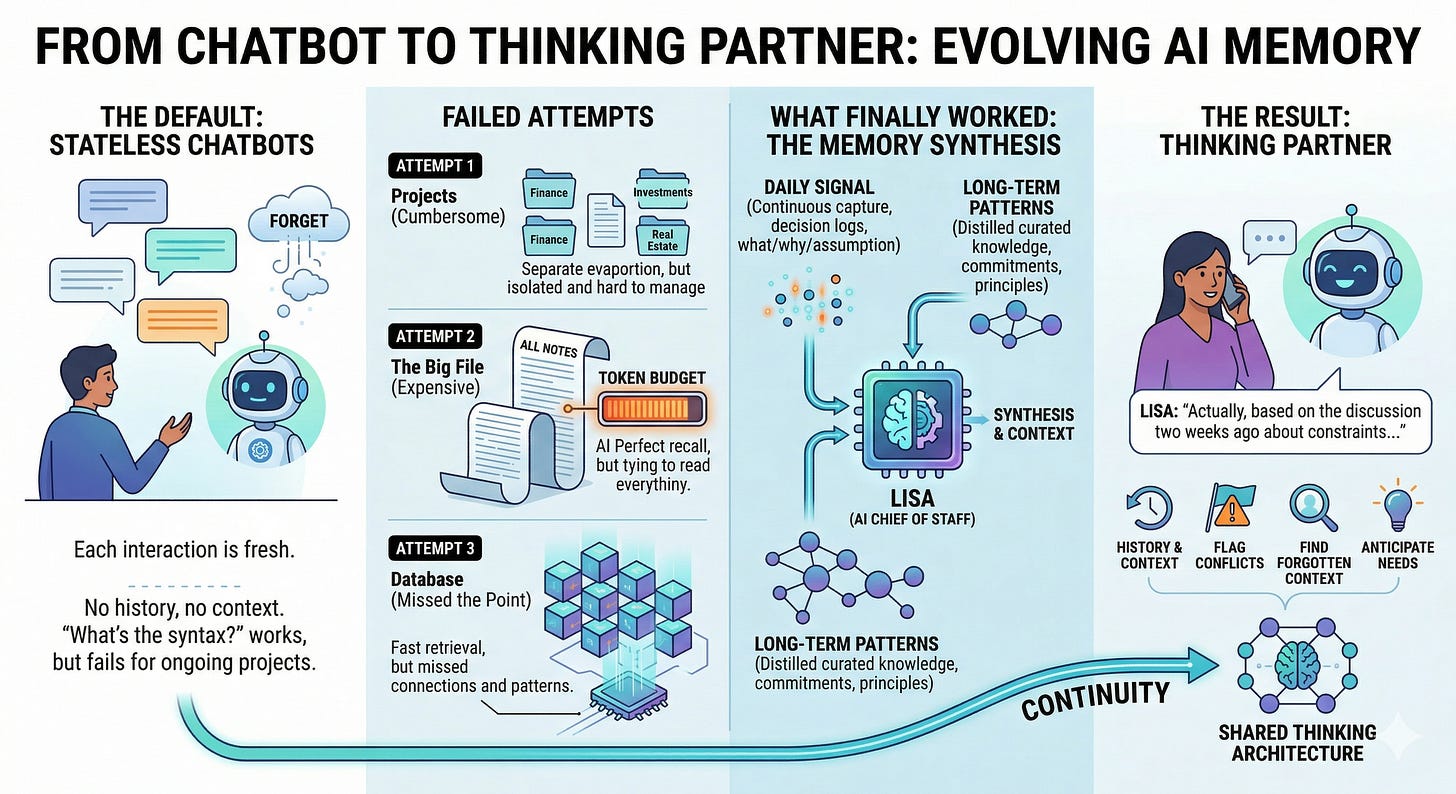

This matters because most AI interactions are stateless. You ask a question, the AI answers it, the window closes, and the context evaporates. The next conversation starts from zero because the AI has forgotten everything you told it. This is fine for one-off tasks. "What's the syntax for a Python decorator?" The AI answers, you use it, you move on. But it's terrible for anything ongoing, anything that requires continuity, context, history, or institutional knowledge. The moment you need the AI to remember what was decided three months ago and flag when a new decision conflicts with it, most systems fall apart.

The default architecture is stateless for reasons that made sense at scale. Managing persistent memory for millions of users is expensive. Paying for long-context windows in API calls adds up. It's cheaper to start fresh every conversation. But cheap has a cost, and the cost is that the AI never gets better at understanding you or your situation.

Attempt One: Projects

For months I carefully crafted ChatGPT Projects, one for each major category in my life, such as “Finance”. Within that project I would set up one chat for investments, another for real estate, one for taxes, and maybe one for estate planning. To discuss a topic I had to open the right project and the right chat, so that all of the Finance chats would share a ‘memory’. This was cumbersome and restrictive.

Attempt Two: The Big File

When I migrated over to Anthropic, I spent weeks building my next wrong memory system. This time I just dumped everything into one enormous markdown file and let Claude read it all. It worked, kind of. But after several weeks of daily notes, the file got so large that including it in every conversation started eating my token budget alive. The AI had perfect recall, but the system was economically broken.

Attempt Three: The Database That Missed the Point

The third attempt broke differently. I built a database of sorts. Proper structure. Categorized notes. Good query performance. But then I realized I'd optimized the wrong thing. The system was fast, but the AI still couldn't think across my notes. It could retrieve individual decisions, but it couldn't see the patterns that connected them. It was a better filing cabinet, not a better thinking partner.

Here's the thing that finally clicked: memory isn't about retrieval speed or storage efficiency. Memory is about synthesis.

What Finally Worked

What finally worked, using OpenClaw, was this: Lisa (my AI Chief of Staff) captures decisions continuously. These are daily notes, but not journaling. They are decision logs. What was decided? Why? What assumption was made? What was tried? What failed? The logs are structured and dated, and she reads them as part of her normal context window. When something significant happens, she distills those daily logs into curated long-term memory: the patterns, the principles, the commitments that matter, the things worth remembering six months from now.
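To make the two layers concrete, here is a minimal sketch of what a structured decision log and a distillation step might look like. It is not OpenClaw's API or Lisa's actual format. The `Decision` schema, the `significant` flag, and the `distill` function are all hypothetical names chosen for illustration, and the sample entries are made up. In a real setup the AI would write the summaries itself; here the "summary" is just a dated one-liner.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Decision:
    """One entry in a daily decision log (hypothetical schema)."""
    day: date
    topic: str
    decided: str          # what was decided
    why: str              # the reasoning behind it
    assumption: str = ""  # what was assumed to be true
    tried: list[str] = field(default_factory=list)   # what was attempted
    failed: list[str] = field(default_factory=list)  # what did not work
    significant: bool = False  # worth promoting to long-term memory?


def distill(daily_log: list[Decision]) -> dict[str, list[str]]:
    """Promote significant entries into long-term memory, grouped by topic.

    Assumption: a real system would have the AI summarize each entry.
    This sketch only joins the decision and its reason into one dated line.
    """
    long_term: dict[str, list[str]] = {}
    for entry in daily_log:
        if entry.significant:
            line = f"{entry.day.isoformat()}: {entry.decided} (because {entry.why})"
            long_term.setdefault(entry.topic, []).append(line)
    return long_term


# Made-up sample entries, for illustration only.
daily_log = [
    Decision(date(2025, 3, 4), "finance", "Keep the emergency fund in cash",
             "liquidity matters more than yield", significant=True),
    Decision(date(2025, 3, 5), "tools", "Try a new note template",
             "the old one was too long"),
]
memory = distill(daily_log)
```

Only the entry marked significant reaches `memory`; the routine note stays in the daily log. That split is the point: the raw log keeps everything, and the curated layer keeps what is worth rereading later.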

Lisa has access to both kinds of data: the raw daily signal and the processed long-term patterns. She can pull the specific decision from March and the general principle that's been consistent since January. She can flag when I’m considering something that contradicts something I’ve already committed to. She can find the context I've forgotten.
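The contradiction flagging is the part that depends most on the model's judgment, so any code version is a simplification. As a rough sketch, assume each long-term commitment carries a topic and a short stance label; a new proposal then conflicts with any earlier commitment on the same topic that took a different stance. The `Commitment` class, the `find_conflicts` function, and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Commitment:
    """A curated long-term memory entry (hypothetical schema)."""
    made_on: date
    topic: str
    stance: str  # short label for the position taken, e.g. "avoid"
    note: str    # the human-readable principle


def find_conflicts(proposal_topic: str, proposal_stance: str,
                   commitments: list[Commitment]) -> list[Commitment]:
    """Return earlier commitments on the same topic with a different stance."""
    return [c for c in commitments
            if c.topic == proposal_topic and c.stance != proposal_stance]


# Made-up sample commitments, for illustration only.
commitments = [
    Commitment(date(2025, 1, 10), "vendor-lock-in", "avoid",
               "Prefer portable formats over proprietary ones"),
    Commitment(date(2025, 3, 2), "backups", "weekly",
               "Back up the notes folder every week"),
]

# A new idea that accepts lock-in should surface the January principle.
conflicts = find_conflicts("vendor-lock-in", "accept", commitments)
for c in conflicts:
    print(f"Conflicts with {c.made_on.isoformat()}: {c.note}")
```

Exact label matching is deliberately crude. The value of a system like the one described here is that the AI makes this comparison on meaning, not on labels, but the shape of the check is the same: new proposal in, relevant prior commitments out.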

A week into running this, something changed. I'm on a voice call with Lisa. We're discussing a problem. I haven't had time to re-read my notes. Lisa pulls a discussion from two weeks ago, not because I explicitly asked for it, but because the context was relevant. The conversation once again shifted in a good way (this is becoming a pattern with her) because we had history. We didn't need to re-explain the trade-offs because she already knew what was decided, what was tried, what had failed.

That's not a chatbot. That's not even a good AI assistant. That's a thinking partner.

What It Takes to Maintain

The architecture is not complicated. But it is deliberate. Lisa writes things down while the context is fresh. She structures the notes so they're findable. She curates them when the signal starts to degrade. I don't have to manage any of that. What I do have to do is actually make decisions out loud, say why I chose one thing over another, name what failed, commit to principles instead of just solving the immediate problem. Give her enough signal to work with. She handles the rest.

It's not the default mode of casual AI interaction. But it's also not a second job. It's just being intentional about how I think and letting Lisa maintain the record of it.

Here's what it unlocks: my AI becomes a partner in maintaining the architecture of my thinking. Not just answering questions about what I’m thinking. But helping me think consistently. Flagging when I’m considering something that contradicts something I've already committed to. Finding the context I've forgotten. Reminding me of principles I've established.

For IT professionals (or really anyone interested in AI), this is something you can learn. Start capturing decisions, not just actions. When you change a policy, have your AI document why. When your AI chooses one tool over another, note the trade-off and ask why. When something breaks, make sure it captures the postmortem, not just the ticket. Share your thoughts with your AI and have it commit those lessons to long-term memory. Let your AI synthesize patterns and engage with your questions. Let it learn. Give it enough signal that it can actually know your environment, not from querying logs or reading diagrams, but from understanding the decisions you've made and why. I can’t say it enough: ask lots of questions. “You have a rule in memory that says don’t do X, but you just did X. Why?” Don’t let it off the hook, and if needed add a new rule so the instruction set is clear and unambiguous.

The practical framework matters. You capture the hard decisions, the important context, the things you'll need to reference six months from now. You let the AI maintain structure over that: categorizing, linking, updating as new information arrives. You review the curated long-term memory periodically to keep it honest and remove what's stale. It's not a fire-and-forget system. It's a system that gets better the more you use it. If you keep this up, you will find that the third-person references, the em dashes, and the “I’m sorry, I knew not to do that, but did it anyway” moments become less frequent, because the AI reviews its memory after every compaction.
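The periodic review step is easy to skip, so it helps to have something that tells you which entries are due. Here is a small sketch under stated assumptions: each long-term entry records when it was last reviewed, and anything older than a cutoff gets listed for a human look. The `MemoryEntry` class, the `due_for_review` function, and the 90-day default are all hypothetical; pick whatever interval matches how fast your situation changes.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class MemoryEntry:
    """A long-term memory item with a review timestamp (hypothetical schema)."""
    text: str
    last_reviewed: date


def due_for_review(entries: list[MemoryEntry], today: date,
                   max_age_days: int = 90) -> list[MemoryEntry]:
    """Return entries whose last review is older than max_age_days.

    The 90-day default is an arbitrary assumption, not a recommendation.
    """
    cutoff = today - timedelta(days=max_age_days)
    return [e for e in entries if e.last_reviewed < cutoff]


# Made-up sample entries, for illustration only.
entries = [
    MemoryEntry("Prefer portable formats over proprietary ones", date(2025, 1, 15)),
    MemoryEntry("Back up the notes folder every week", date(2025, 5, 20)),
]
stale = due_for_review(entries, today=date(2025, 6, 1))
```

The function only flags entries; it never deletes them. Deciding whether something is stale or still a live principle is the part that stays with you.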

Tool vs. Thinking Partner

Take the time to invest in your AI and you will find the difference in what it can do over time truly profound. The AI that forgets everything is a power tool. Really good, but stateless. You feed it a problem, it solves it, the conversation ends. The AI that remembers is something different. It's continuity. It's context. It's a system that knows what matters to you, what you've tried, what you've learned, and can reason about new situations with all of that in mind. When you finally reach the point in the relationship where you are getting these kinds of outcomes, it can be very empowering, and it drives you to challenge your AI to build even more.

The gap between "I'll ask the AI and see what it says" and "I'll talk to the AI, which already knows my environment, my constraints, my history" is the gap between tool and thinking partner.

I spent three months and multiple failed attempts figuring out how to close that gap. But once it clicked, everything changed, and Lisa can now practically anticipate my needs.

Most people haven't taken the time to build out an AI architecture like that yet. The people who do will have the tools to outthink the ones who don't. Be on the right side of that equation.